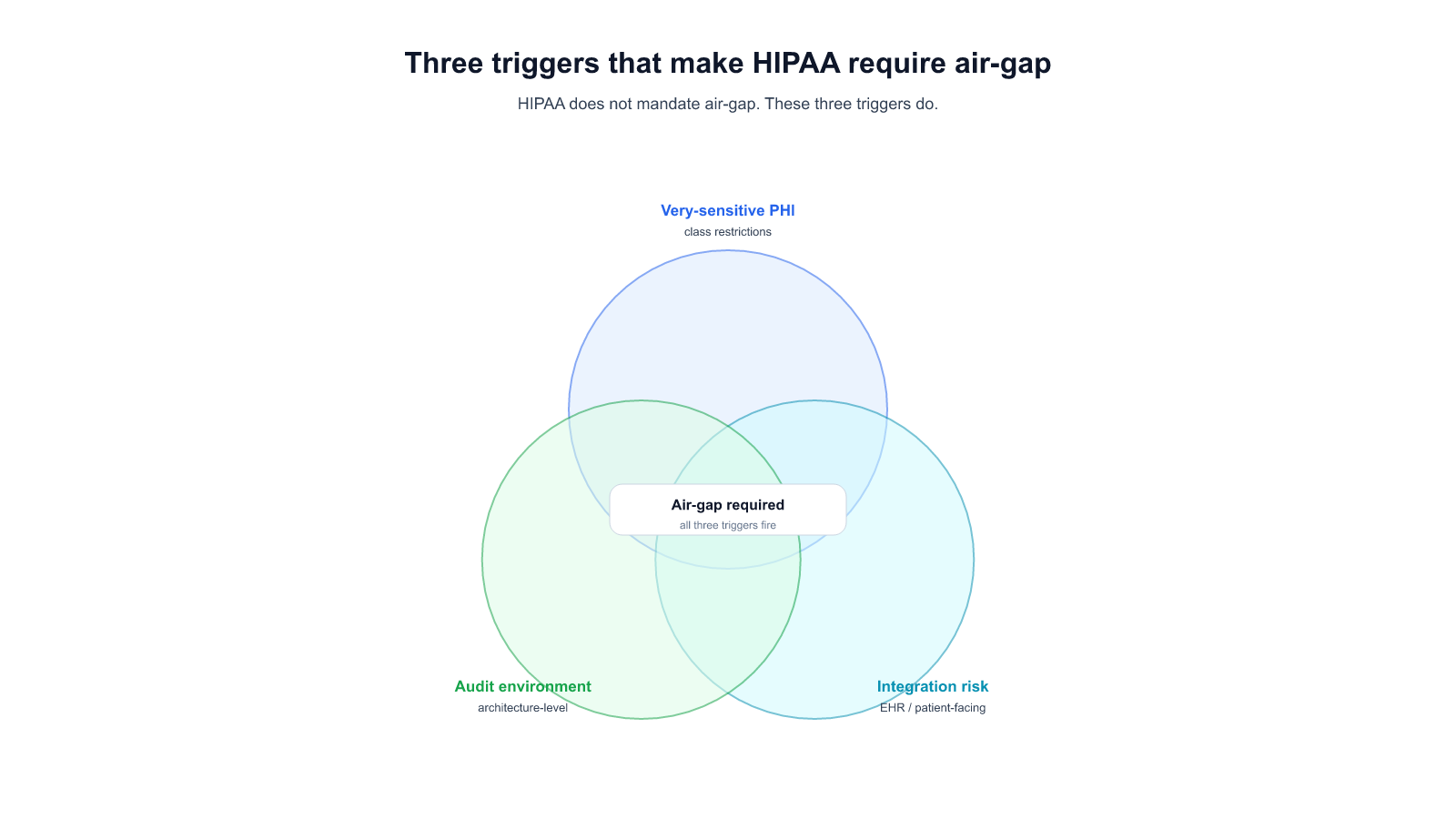

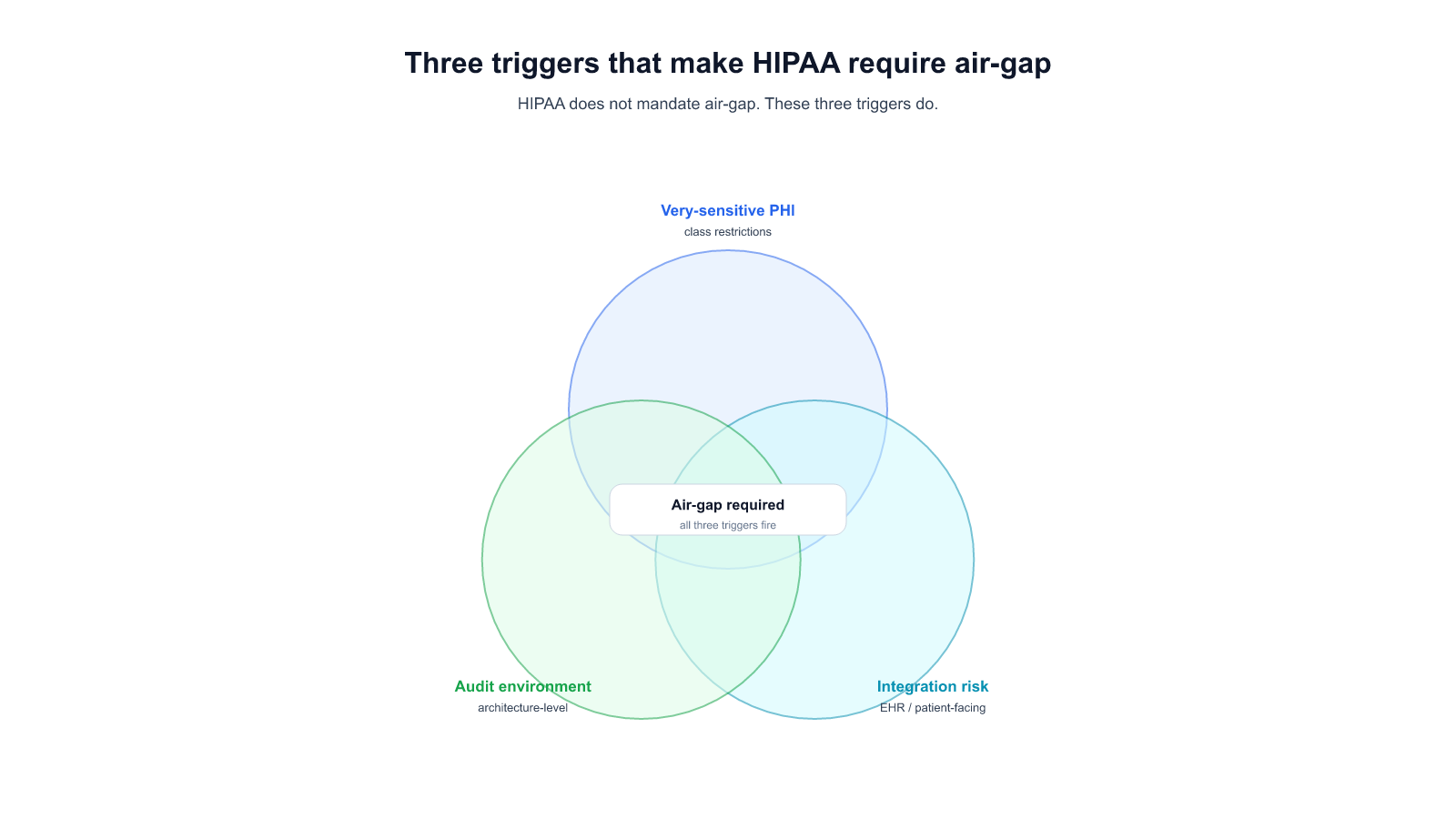

HIPAA is outcome-focused; BAA-encrypted SaaS satisfies most covered-entity workloads. "HIPAA requires air-gap" is shorthand for one of three specific triggers — sensitive PHI classes with re-disclosure restrictions, integration-pattern risk, or architecture-auditing environments. Decision framework included.

Most HIPAA-covered entities evaluating AI for clinical or administrative work discover in the first legal review that HIPAA doesn't actually require air-gap deployment. The Security Rule sets technical, administrative, and physical safeguards; a BAA-encrypted SaaS deployment with documented access controls and encryption in transit satisfies most of the rule for most workloads. That's the starting point.

Then a specific workload comes up — usually something involving a particularly sensitive class of PHI, or an integration pattern where risk exposure to the SaaS vendor is itself the problem — and the compliance conversation changes. The three scenarios below are the most common triggers we've seen push a covered entity from hybrid deployment (encrypted SaaS + BAA) to full air-gap.

This post is the buyer-side version of the decision. Engineering-depth on each scenario sits in [[034 — Self-Hosted LLM: The Operator's Checklist for Enterprise Production]] and [[027 — Engineer-Audience Air-Gap Real Incidents]]; the three layers of "private" this post uses as a framing sit in [[032 — Private AI Assistant: What "Private" Actually Means When You're Regulated]]; the full pillar guide is [[035 — The Complete Guide to On-Premise LLM Deployment for Regulated Enterprises]].

The Security Rule is outcome-focused for most of its scope. It requires that a covered entity implement reasonable and appropriate safeguards; it does not prescribe a specific architecture. Encryption in transit, encryption at rest, per-user access controls, audit logging, incident response procedures, and risk analysis documentation are the controls a covered entity can point to in an OCR audit. A SaaS vendor that provides these under a signed BAA usually passes for most use cases.

The Security Rule is architecturally prescriptive only in narrow places. The "physical safeguards" section requires specific attention to facility access controls and workstation security, but its clearest architectural requirements concern what happens downstream of a specific incident disclosure, not AI deployment in general.

So "HIPAA requires air-gap" is almost always a shorthand for something else — a specific risk scenario that the buyer's compliance team has flagged. The rest of this post covers the three scenarios we've watched turn into that shorthand.

Some categories of PHI carry downstream-disclosure restrictions that go beyond the Security Rule baseline. Substance-use disorder records covered by 42 CFR Part 2, some psychiatric and psychotherapy notes, HIV and reproductive-health records under specific state statutes — these aren't just PHI, they're PHI with additional contractual and regulatory obligations on every party that handles them.

For a covered entity handling these categories, the risk calculus shifts. A BAA-encrypted SaaS deployment could technically cover the handling — but a vendor-side incident involving these categories is not merely a reportable breach; it can trigger patient-notification obligations under state law, Part 2 disclosure tracking requirements, and in some cases revocation of the covered entity's clinical research authorization. The cost of that incident is disproportionately higher than a standard Security Rule breach.

When the Chief Privacy Officer calculates the expected value of "we accept some vendor-side incident risk under BAA" against "we air-gap this specific workload," the air-gap choice often wins for the subset of the corpus containing these PHI classes. It's not a blanket decision; it's a classifier-routed one (see the hybrid pattern in [[035]] §7).
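To make "classifier-routed" concrete, here is a minimal sketch of routing at the document level. It assumes an upstream classifier has already tagged each document with PHI class labels; the label strings, the `Document` type, and the two tier names are hypothetical illustrations, not part of any product or of [[035]].

```python
from dataclasses import dataclass, field
from enum import Enum


class Tier(Enum):
    AIR_GAP = "air_gap"      # on-prem, no vendor-side access
    BAA_SAAS = "baa_saas"    # encrypted SaaS under a signed BAA


# Hypothetical labels for PHI classes that carry re-disclosure restrictions.
RESTRICTED_CLASSES = frozenset({
    "part2_sud",             # 42 CFR Part 2 substance-use disorder records
    "psychotherapy_notes",
    "hiv_status",
    "reproductive_health",
})


@dataclass(frozen=True)
class Document:
    doc_id: str
    # Labels assigned by an upstream classifier (assumed to exist).
    phi_classes: frozenset = field(default_factory=frozenset)


def route(doc: Document) -> Tier:
    """Send a document to the air-gapped tier if it carries any restricted class."""
    if doc.phi_classes & RESTRICTED_CLASSES:
        return Tier.AIR_GAP
    return Tier.BAA_SAAS
```

The routing rule is deliberately conservative: a single restricted label is enough to keep a document off the SaaS tier. In practice the harder problem is classifier recall, since a missed label sends restricted PHI to the wrong tier.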

A covered entity deploys an AI coworker that grounds on patient records — the retrieval corpus is the EHR, essentially. In this architecture, the AI's retrieval layer reads the full patient data graph to answer any query. Even with the vendor's BAA and encryption in transit, the vendor's operational access to retrieval logs and query traces is equivalent to giving a third party structured read access to patient records.

Many Compliance Officers we've watched evaluate this architecture classify it as unacceptable regardless of the vendor's posture. The issue isn't the encryption story; it's the pattern of what a support-team debugging session sees. A support engineer looking at a retrieval trace to diagnose a quality issue is, in effect, reading patient records.

For this trigger, air-gap isn't required by a specific regulation — it's required by the Privacy Officer's interpretation of the minimum-necessary principle under 45 CFR 164.502(b), extended to vendor operational access. Deployment Pattern A (fully on-prem) from [[035]] §7 satisfies this cleanly; Pattern B (BYOC) can satisfy it only with a specific control-plane telemetry configuration that vendors do not ship as default.

Certain auditors and institutional review bodies evaluate architecture rather than controls. Academic medical centers with federally funded research, some VA and DoD healthcare workloads, and specific state regulators (notably some in sectors where a national-security overlay applies) ask a different question during audit: "Show me the physical boundary of this data."

This question does not have a clean answer in a SaaS or BYOC topology. The deployment description becomes "the data is encrypted and our BAA binds the vendor," which is a controls answer, not an architecture answer. Auditors operating in the architecture-evaluation mode mark this as non-compliant — not because the controls are weak, but because the frame of the question doesn't match the frame of the answer.

For covered entities operating under this auditor posture, air-gap is the only deployment that matches the auditor's frame. The decision is less about incident risk and more about passing the next audit cycle without a finding that requires re-architecting the system.

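Three questions, in order:

1. Does the workload touch PHI classes with re-disclosure restrictions (42 CFR Part 2 substance-use disorder records, psychiatric and psychotherapy notes, HIV or reproductive-health records under state statute)?
2. Does the integration pattern give the vendor operational access, such as retrieval logs and query traces, that amounts to structured read access to patient records?
3. Does your auditor or review body evaluate architecture rather than controls, asking for the physical boundary of the data?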

If all three answers are no, HIPAA does not require air-gap for that workload. A BAA-encrypted SaaS deployment or a BYOC hybrid satisfies the rule, costs materially less over three years (see [[035]] §4 for the TCO model), and preserves your platform team's capacity for other initiatives.

If one or more are yes for a specific subset of the workload, Pattern C (hybrid with audited boundary) from [[035]] §7 is usually the right answer — classifier-routed, air-gap only for the subset that triggers the bar, the rest on the cheaper and faster-to-upgrade tier.
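The decision framework is small enough to write down as code. The sketch below encodes one yes/no answer per trigger covered in this post and returns the recommendation described above. The field names and the returned strings are hypothetical; treat it as a way to record a per-workload assessment, not as a compliance determination.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class WorkloadAssessment:
    # One yes/no answer per trigger; field names are illustrative.
    restricted_phi_classes: bool          # PHI with re-disclosure restrictions
    vendor_access_exposes_records: bool   # integration-pattern risk
    auditor_evaluates_architecture: bool  # architecture-auditing environment


def fired_triggers(a: WorkloadAssessment) -> List[str]:
    """Return the names of the triggers answered 'yes', in question order."""
    checks = [
        ("sensitive PHI class", a.restricted_phi_classes),
        ("integration-pattern risk", a.vendor_access_exposes_records),
        ("architecture-auditing environment", a.auditor_evaluates_architecture),
    ]
    return [name for name, hit in checks if hit]


def recommend(a: WorkloadAssessment) -> str:
    """Map an assessment to the deployment recommendation from this post."""
    fired = fired_triggers(a)
    if not fired:
        return "BAA-encrypted SaaS or BYOC hybrid"
    return "Pattern C hybrid; air-gap the subset triggering: " + ", ".join(fired)
```

Run it once per workload, not once per organization: the point of the hybrid pattern is that different workloads in the same covered entity can land on different tiers.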

If you're evaluating HIPAA-aligned AI deployment for a specific workload and want to work through which trigger (if any) applies, we do these conversations — no pitch deck, no slides, just the compliance framing and the decision tree.

Start a conversation — share your PHI class breakdown, integration pattern, and auditor posture. We'll come back with where on the 3 triggers we think you actually sit.

Start a 14-day trial — run Alli Coworker against a representative de-identified slice of your corpus on your own infrastructure.
