Self-hosted LLM production is seven operational surfaces, not a serving-runtime comparison. Model loading, serving config, observability, upgrade lifecycle, auth, eval loop, failure modes: what goes wrong first, and the minimum-viable bar for each.

If you can run `vllm serve meta-llama/Llama-3.1-70B-Instruct` on a single node and hit a `/v1/chat/completions` endpoint, you have a demo. If you run that in production inside an enterprise perimeter, with real users, real documents, and a real failure surface, you have an infrastructure project. Those two things are easy to confuse, and the distance between them is where most enterprise LLM deployments spend the first 90 days they didn't plan for.

This post is the checklist we wish every platform team reading the docs had started with. It's organized by what goes wrong first — model loading (day 1), serving config (day 3-7), observability (week 2 when the first postmortem happens), upgrades (month 2 when the first model refresh arrives), auth and admission control (before your security team audits you), the eval loop (when quality regressions start going unnoticed), and failure modes (forever).

Nothing in here is controversial at the level of individual tools. What's usually missed is the order in which these surfaces get production-grade, because demos don't exercise them. Enterprise operations do.

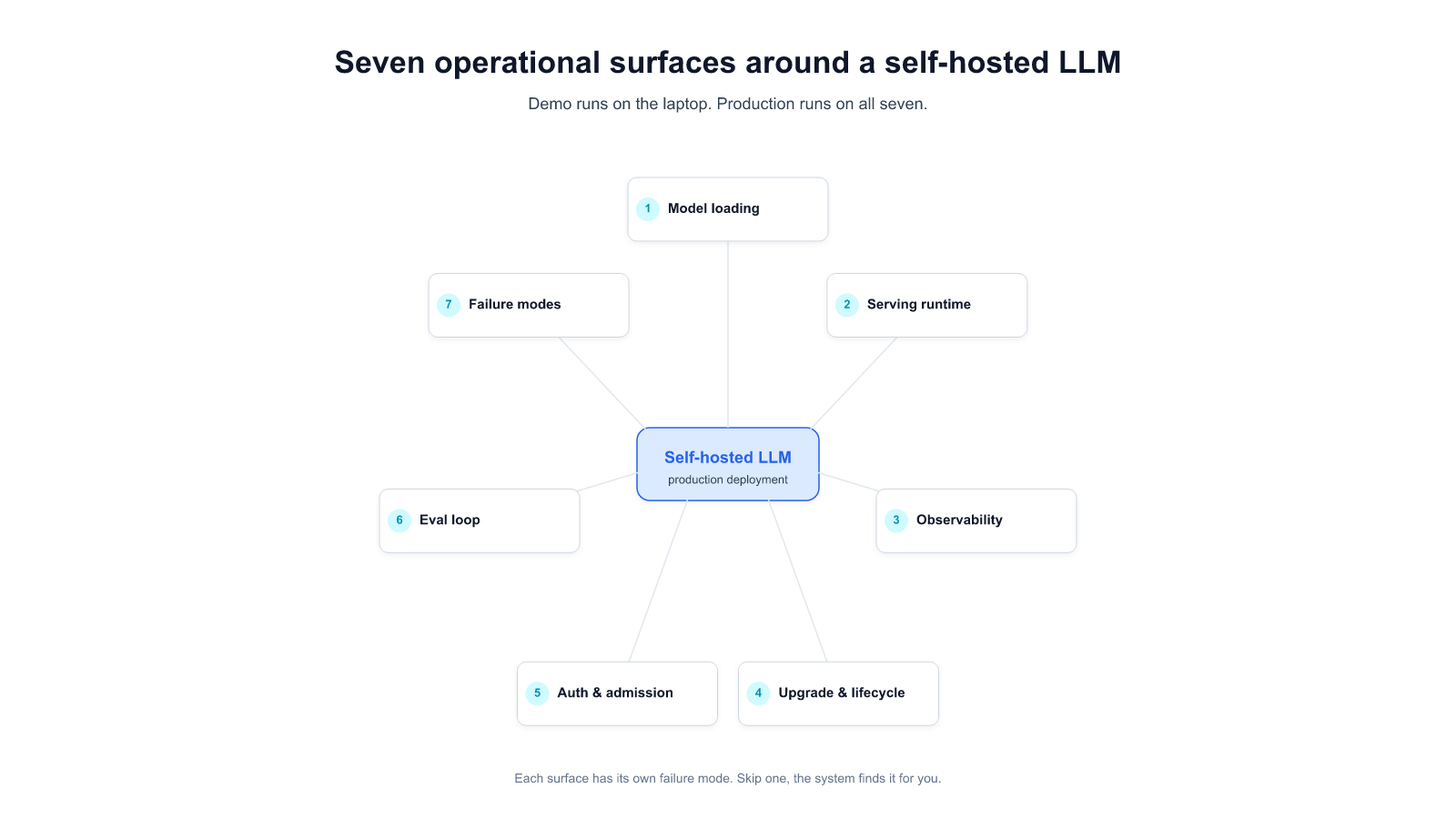

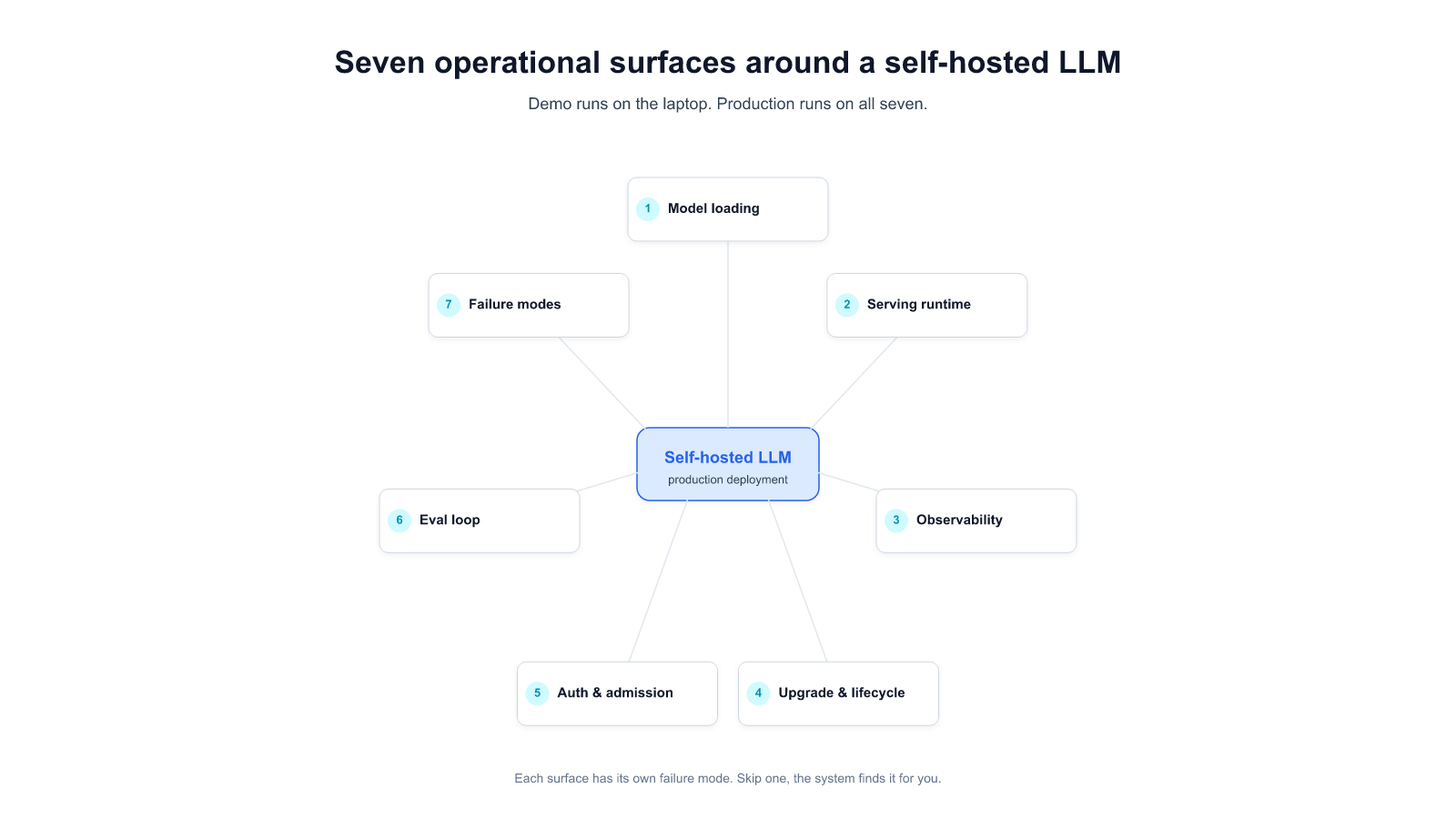

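Before the checklist, the mental model. A self-hosted LLM is not a single service. It's seven surfaces you have to decide on, configure, monitor, and own on an ongoing basis:

1. Model loading
2. Serving runtime
3. Observability
4. Upgrade lifecycle
5. Auth and admission control
6. Eval loop
7. Failure modes and runbooks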

Each gets a section below with the minimum-viable production bar, the failure mode we've most often seen, and the question to ask yourself before you declare it done.

What a demo hides: the demo loads a model once, it stays loaded, nobody asks where it came from. Production has to answer three questions the demo doesn't:

A reference to `meta-llama/Llama-3.1-70B-Instruct` without a revision SHA means the next push from the model owner rolls into your production. Pin to commit SHAs in your deployment manifest. Treat weight artifacts as immutable.

Most-seen failure mode: registry capacity. We've watched a team's first production upload of a larger model fail silently because the default object-store bucket had throughput quotas sized for typical CI artifacts, not for 140 GB writes. That cost one engineer-day to diagnose and reconfigure, and it recurs on every model upgrade unless you fix the registry architecture, not just the specific upload (see [[023]] Incident 3 for the anonymized long version).

Done-check: can you pull the currently-deployed weights, byte-identical, from two geographically-separated caches without any internet egress? If yes, Surface 1 is production-grade.
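One way to make this done-check mechanical is to compare SHA-256 digests of the weight files in each cache against digests recorded at deploy time. The sketch below is a minimal illustration under stated assumptions, not a description of any particular registry's tooling: the manifest shape (relative path mapped to a hex digest) and the function names `sha256_of` and `verify_cache` are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large weight shards never sit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_cache(cache_root: Path, expected: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means the cache matches the manifest.

    `expected` maps a path relative to `cache_root` to its SHA-256 hex digest
    (a hypothetical manifest shape chosen for this sketch).
    """
    problems = []
    for rel_path, want in sorted(expected.items()):
        candidate = cache_root / rel_path
        if not candidate.is_file():
            problems.append(f"missing: {rel_path}")
        elif sha256_of(candidate) != want:
            problems.append(f"digest mismatch: {rel_path}")
    return problems
```

Running `verify_cache` against both caches with the same `expected` mapping, from a host with no internet egress, answers the byte-identical question without pulling anything from upstream.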

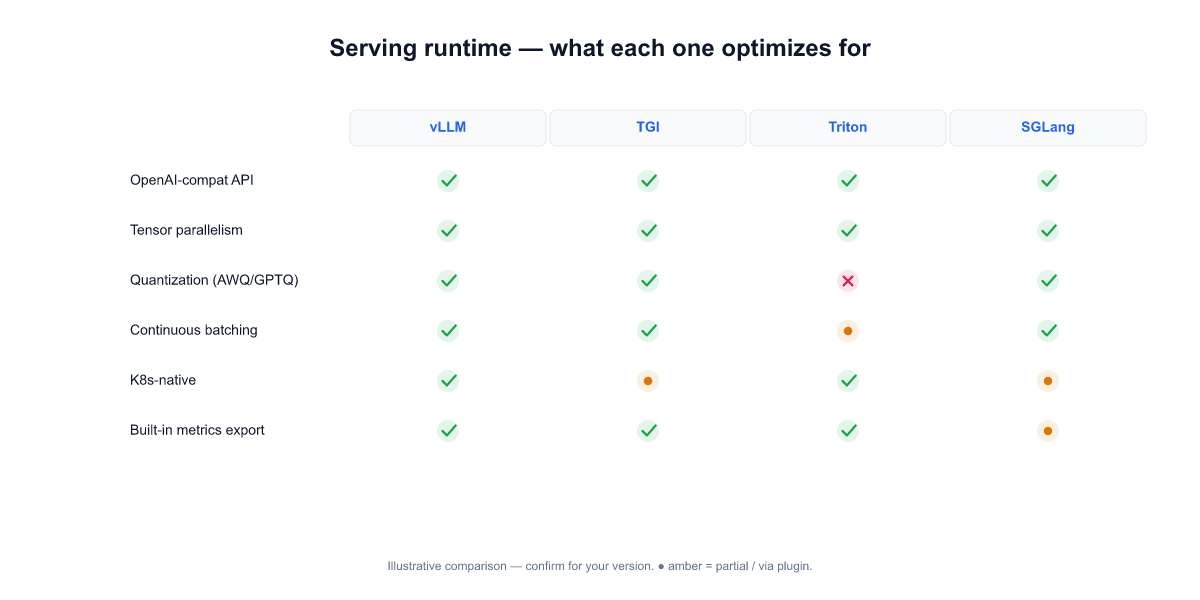

The serving-runtime decision gets the most air-time in community discussions and matters less than it looks. The real question is not "vLLM vs TGI vs Triton vs SGLang" — it's "which one gives us observability surfaces and operational hooks we can own?" The runtimes have converged on similar throughput in most shapes; they haven't converged on how much of their internal state is inspectable and scriptable.

Short guide for the enterprise choice:

Most-seen failure mode: configuration drift, not runtime choice. The default `vllm serve` config is tuned for a common case that rarely matches a specific enterprise's hardware and workload mix. `--tensor-parallel-size`, `--gpu-memory-utilization`, `--max-num-seqs`, and `--kv-cache-dtype` are the four knobs where we've seen the biggest production-vs-default deltas. On a B200 cluster replacing an H200 fleet, leaving these at defaults produced measurably slower serving than the older hardware, a regression that took engineer-days to tune back out (see [[023]] Incident 1).

Done-check: do you have a per-hardware-class config matrix checked into source control — "H100 × 2 for Llama-3.1-70B-Instruct looks like this; B200 × 4 for the same model looks like that" — with benchmarks that rerun on every hardware change? If yes, Surface 2 is production-grade.
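A config matrix like the one this done-check describes can live in source control as plain data. The sketch below shows one possible shape, assuming the four `vllm serve` flags named above. The hardware-class keys (`h100x2`, `b200x4`) are hypothetical labels, and every numeric value is a placeholder, not a tuned recommendation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServeConfig:
    tensor_parallel_size: int
    gpu_memory_utilization: float
    max_num_seqs: int
    kv_cache_dtype: str


# Keys are (hardware class, model). Every value below is a placeholder to be
# replaced by whatever your own benchmarks produce for that hardware.
CONFIG_MATRIX = {
    ("h100x2", "meta-llama/Llama-3.1-70B-Instruct"): ServeConfig(2, 0.90, 128, "auto"),
    ("b200x4", "meta-llama/Llama-3.1-70B-Instruct"): ServeConfig(4, 0.92, 256, "fp8"),
}


def serve_argv(hardware_class: str, model: str) -> list[str]:
    """Build the argv for `vllm serve`; raises KeyError for an unbenchmarked pair."""
    cfg = CONFIG_MATRIX[(hardware_class, model)]
    return [
        "vllm", "serve", model,
        "--tensor-parallel-size", str(cfg.tensor_parallel_size),
        "--gpu-memory-utilization", str(cfg.gpu_memory_utilization),
        "--max-num-seqs", str(cfg.max_num_seqs),
        "--kv-cache-dtype", cfg.kv_cache_dtype,
    ]
```

Failing with a `KeyError` on an unknown hardware class is deliberate in this sketch: a new hardware class should force a benchmark run and a reviewed matrix entry instead of silently inheriting defaults.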

The most common production lesson across the on-prem deployments we've watched: teams that instrumented before the first incident recovered in minutes; teams that instrumented after spent days reconstructing the incident from user complaints and GPU fan noise.

Minimum-viable production metrics — pull these as Prometheus series on day one, not week three:

`nvidia-smi dmon -s umct` in a scraper is enough to start.

Dashboards that actually get used: one "is the cluster healthy right now" board (4-6 panels that everyone glances at), and one "how did this specific incident unfold" board (request timeline + GPU timeline + queue timeline, overlapping axes). A third "quality metrics" board belongs to Surface 6 (eval loop), not here.
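As a rough illustration of the `nvidia-smi dmon` scraper idea, the sketch below turns dmon-style text into Prometheus text-exposition lines. It reads column names from the header line instead of hard-coding them, since the exact columns depend on the `-s` flags and driver version. The sample text is abbreviated and illustrative, and the `gpu_dmon` metric prefix is a hypothetical naming choice.

```python
def parse_dmon(text: str) -> list[dict[str, float]]:
    """Parse `nvidia-smi dmon` style text into one dict per data row.

    Column names come from the first '#' header line; later '#' lines
    (units, repeated headers) are ignored. Fields reported as '-' are skipped.
    """
    columns: list[str] = []
    rows = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith("#"):
            if not columns:
                columns = line.lstrip("#").split()
            continue
        row = {}
        for name, raw in zip(columns, line.split()):
            if raw != "-":
                row[name] = float(raw)
        rows.append(row)
    return rows


def to_prometheus(rows: list[dict[str, float]], prefix: str = "gpu_dmon") -> str:
    """Render rows as Prometheus text-exposition lines, labelled by GPU index."""
    lines = []
    for row in rows:
        gpu = int(row["gpu"])
        for name, value in row.items():
            if name != "gpu":
                lines.append(f'{prefix}_{name}{{gpu="{gpu}"}} {value}')
    return "\n".join(lines) + "\n"


# Abbreviated, illustrative sample; real output has more columns.
SAMPLE = """\
# gpu    sm   mem    fb
# Idx     %     %    MB
    0    87    41 71234
    1     -     - 70112
"""
```

In a real scraper the text would come from the subprocess output and the rendered lines would be served on a metrics endpoint or written to a textfile collector; both are left out here to keep the parsing logic visible.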

Most-seen failure mode: production teams wire metrics for serving but not for quality. The cluster is healthy — latency is green, queue is empty, GPUs are happy — and yet the answers getting returned have gotten worse after a recent model refresh or parser upgrade. Nobody notices for weeks because no dashboard is looking. The fix is to make Surface 6 (eval) a first-class production citizen, not just a pre-deploy check.

Done-check: when your team's oncall gets paged at 3 AM, can they tell within 10 minutes whether the root cause is hardware, serving-runtime config, model weights, input-traffic shape, or retrieval corpus — without rerunning user queries? If yes, Surface 3 is production-grade.

Every base model release, every serving-runtime minor version, every CUDA point release is a potential regression. Most enterprise deployments discover this the hard way on their first post-launch upgrade cycle — roughly three to six months in, exactly when the initial production team has moved on to other projects and nobody owns "LLM serving" end-to-end anymore.

The production bars:

Most-seen failure mode: upstream dependency upgrades that ship inside a routine minor-version bump and introduce non-obvious behavior changes. In one case, a parser minor-version upgrade added a previously undefined content type; the schema downstream of it rejected everything with the new type, and documents with charts silently stopped indexing for weeks before anyone noticed. The parser upgrade passed the pre-deploy checks because the checks were runtime-level, not content-level (see [[023]] Incident 2 for the anonymized detail).
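A content-level check for this kind of failure could compare what the old and new parser versions emit for the same golden documents. The sketch below is one possible shape, assuming parser output can be modeled as a list of elements with a `type` key. That representation, and the function names, are assumptions made for illustration.

```python
from collections import Counter


def content_type_counts(parsed_docs: list[list[dict]]) -> Counter:
    """Count emitted elements per content type across a set of parsed documents."""
    return Counter(el["type"] for doc in parsed_docs for el in doc)


def content_level_diff(baseline: Counter, candidate: Counter,
                       schema_types: set[str]) -> dict[str, list[str]]:
    """Flag types the downstream schema would reject and types that vanished.

    `baseline` comes from the currently deployed parser, `candidate` from the
    upgrade under test, both run over the same golden documents.
    """
    return {
        "unknown_to_schema": sorted(t for t in candidate if t not in schema_types),
        "disappeared": sorted(t for t in baseline if candidate[t] == 0),
    }
```

A non-empty `unknown_to_schema` list is the signal that would have caught the chart regression before deploy: the new type shows up in the diff instead of being dropped by the schema in production.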

Done-check: can you take a fresh clone of your deployment repo, a fresh CI runner, and reproduce today's production exactly — right down to the weight SHA, runtime image SHA, CUDA version, and parser schema — without any manual steps? If yes, Surface 4 is production-grade.
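Part of this done-check can be enforced in CI by rejecting any manifest that still contains a floating reference. The sketch below assumes a flat manifest with four hypothetical field names. It treats a 40-character hex string as a pinned weight revision, an `@sha256:` digest as a pinned image, and a dotted numeric string as an exact version.

```python
import re

_COMMIT_SHA = re.compile(r"[0-9a-f]{40}")
_IMAGE_DIGEST = re.compile(r".+@sha256:[0-9a-f]{64}")
_EXACT_VERSION = re.compile(r"\d+\.\d+(\.\d+)?")

# Field names are hypothetical; adapt them to your own manifest schema.
_CHECKS = {
    "weights_revision": _COMMIT_SHA,
    "runtime_image": _IMAGE_DIGEST,
    "cuda_version": _EXACT_VERSION,
    "parser_schema_version": _EXACT_VERSION,
}


def unpinned_fields(manifest: dict[str, str]) -> list[str]:
    """Return the manifest fields that are missing or not pinned to an exact value."""
    return [
        key for key, pattern in _CHECKS.items()
        if not pattern.fullmatch(manifest.get(key, ""))
    ]
```

Values such as `main` or an image tag like `latest` fail the match, so a manifest that would drift on the next upstream push never reaches the deploy step.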

This is the surface that usually gets postponed until the security team's first audit conversation. That conversation goes better if the answer to "who can call this API, how is it logged, and what happens when usage spikes or something looks abusive?" is on a page rather than a promise.

Production bars:

Most-seen failure mode: the gateway lets requests through on a static API key because that was the fastest way to ship the first demo, and the rotation-to-SSO migration gets deprioritized indefinitely. When the first security incident happens (not if), the postmortem cannot attribute the incident to a user, because logs only have "api-key-v1" in them. We've seen this cause 2-3 weeks of cross-team effort to retroactively propagate identity.

Done-check: pick any inference request from the last 30 days. Can you answer — from logs alone — who made it, under what team's quota, what corpus it grounded against, what tools it called? If yes, Surface 5 is production-grade.
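Quota attribution only works if the gateway keys its limits on a resolved identity and not on a shared API key. The sketch below is a minimal per-identity token bucket under stated assumptions: the class name `QuotaGate` is hypothetical, identities are plain strings already resolved by SSO, and the clock is injectable purely so the logic can be tested.

```python
import time


class QuotaGate:
    """Per-identity token bucket for admission control at a gateway."""

    def __init__(self, capacity: float, refill_per_sec: float, now=time.monotonic):
        self.capacity = capacity              # maximum burst size
        self.refill_per_sec = refill_per_sec  # sustained rate
        self.now = now
        # identity -> (tokens remaining, time of last update)
        self._buckets: dict[str, tuple[float, float]] = {}

    def allow(self, identity: str, cost: float = 1.0) -> bool:
        """Charge `cost` to the identity's bucket; return False if over quota."""
        t = self.now()
        tokens, last = self._buckets.get(identity, (self.capacity, t))
        # Refill for the elapsed time, capped at the bucket capacity.
        tokens = min(self.capacity, tokens + (t - last) * self.refill_per_sec)
        allowed = tokens >= cost
        if allowed:
            tokens -= cost
        self._buckets[identity] = (tokens, t)
        return allowed
```

Passing the prompt or output token count as `cost` turns the same structure into a token-based quota. A production gateway would also need shared state across replicas, which this in-memory sketch does not attempt.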

Every other surface protects uptime and serving-correctness. This one protects answer-correctness — the thing your users actually care about. And it's the one enterprise teams are most likely to under-invest in because its ROI is only visible when a regression doesn't ship.

The production bar:

Most-seen failure mode: the eval dataset exists, someone built it once, nobody is responsible for maintaining it. Over six months, it drifts out of alignment with what users actually ask, and the automated runs start passing on an out-of-date suite while real users start hitting regressions. The failure isn't technical — it's organizational, and the fix is naming an owner.

Done-check: for a random weight upgrade that your team ran in the last month, can you point at the pre-upgrade eval result, the post-upgrade eval result, and the delta by task category — without running anything manually? If yes, Surface 6 is production-grade.
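The delta by task category in this done-check is a small computation once eval results are stored per query. The sketch below assumes each result is a record with a `category` and a boolean `passed` field, and uses a 2-point absolute drop as the regression threshold. Both the record shape and the threshold are illustrative assumptions.

```python
from collections import defaultdict


def pass_rate_by_category(results: list[dict]) -> dict[str, float]:
    """results: [{"category": str, "passed": bool}, ...] -> pass rate per category."""
    totals, passes = defaultdict(int), defaultdict(int)
    for r in results:
        totals[r["category"]] += 1
        passes[r["category"]] += bool(r["passed"])
    return {c: passes[c] / totals[c] for c in totals}


def regressions(before: dict[str, float], after: dict[str, float],
                max_drop: float = 0.02) -> dict[str, float]:
    """Categories whose pass rate dropped by more than `max_drop` (absolute).

    A category missing from `after` counts as a pass rate of 0.0, so a
    silently dropped category is reported as a regression.
    """
    return {
        c: round(after.get(c, 0.0) - before[c], 4)
        for c in before
        if before[c] - after.get(c, 0.0) > max_drop
    }
```

Gating per category instead of on the overall average matters because a sharp drop in one task type can hide behind small gains elsewhere.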

Every production system has a tail of incidents that don't fit the other six surfaces. Write them down before they happen. The runbook patterns that matter most for self-hosted LLM at enterprise scale:

Most of these runbooks are short — half a page each. What matters is they exist, they're owned by a named team, and the conditions that trigger each are alertable rather than discovered via user complaint.

Most-seen failure mode: the team has runbooks for classical infra incidents (disk full, pod restarts) but no LLM-specific runbooks. The first LLM-specific incident — a prompt injection, a quantization regression, a tokenizer drift — triggers a 6-hour panic because nobody has done this before. The fix is spending two weeks writing the runbooks before the first incident, using OWASP LLM Top 10 and the eval dataset failure modes as a starting point.

Done-check: pick any of the 5 failure modes above. Can someone on your on-call rotation, paged at 3 AM, resolve it by following a written runbook without needing to ping the senior engineer who built the system? If yes, Surface 7 is production-grade.
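Making a condition alertable usually means reducing it to a value that can be compared against a baseline. For tokenizer drift, one possible approach is to fingerprint the token IDs produced for a fixed probe set and alert when the fingerprint changes. In the sketch below, `encode` stands for whatever callable your tokenizer exposes that maps a string to a sequence of integer IDs, and the probe strings are arbitrary examples. Both are assumptions.

```python
import hashlib
import json
from typing import Callable, Sequence

# Arbitrary probe strings; a real set would cover your own languages and formats.
PROBES = ("Hello, world!", "def f(x):\n    return x", "2024-01-31T00:00:00Z")


def tokenizer_fingerprint(encode: Callable[[str], Sequence[int]],
                          probes: Sequence[str] = PROBES) -> str:
    """Hash the token IDs for a fixed probe set into one comparable string."""
    payload = json.dumps([list(encode(p)) for p in probes])
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def has_drifted(encode: Callable[[str], Sequence[int]], baseline: str) -> bool:
    """True when the current tokenizer no longer matches the recorded baseline."""
    return tokenizer_fingerprint(encode) != baseline
```

Recording the baseline fingerprint alongside the pinned weight revision gives the runbook a concrete first step: compare fingerprints, then decide whether the tokenizer or the weights changed.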

Copy-paste onto your team's production-readiness doc. Each item maps back to a surface above.

Model loading

- [ ] Weights served from local OCI-compatible registry with integrity verification
- [ ] All model references pinned to commit SHAs in deployment manifest
- [ ] Resumable downloads with checksummed local caches

Serving runtime

- [ ] Per-hardware-class config matrix in source control
- [ ] Automated benchmark rerun on every hardware change
- [ ] Runtime version pinned in deployment manifest

Observability

- [ ] Token / queue / resource / request-level metrics on day 1
- [ ] "Cluster health" dashboard + "incident timeline" dashboard
- [ ] Request-level sampling with structured logs

Upgrade lifecycle

- [ ] Percentage-routed canary with automatic rollback
- [ ] Per-release eval gate blocks single-digit-percent quality regressions
- [ ] Weight SHA + runtime image SHA pinned in manifest
- [ ] Rollback runbook with named time budget

Auth + admission

- [ ] Corporate SSO in front of inference API
- [ ] Per-identity quotas at the gateway
- [ ] Max prompt / max output / max tool-chain depth limits
- [ ] Audit logging with retention matched to sector regulation

Eval loop

- [ ] Task-specific dataset (50-500 real queries)
- [ ] Automated run on every weight / runtime / corpus change
- [ ] Quality dashboard in the same rotation as serving metrics
- [ ] Weekly failure-case review with named owner

Failure modes + runbooks

- [ ] Runbook for GPU OOM / kernel miscompile / prompt injection / tokenizer drift / silent crash
- [ ] Each runbook owned by a named team
- [ ] Alertable conditions, not user-complaint discovery

A capable platform team hitting this checklist from scratch takes 8-16 weeks for a first production cluster. Teams that try to short-circuit it almost always spend the same time after launch, on incident response. The trade isn't shorter vs longer — it's planned vs unplanned.

If your team is moving an LLM to self-hosted production and wants to pressure-test your checklist against this one, or just talk through the hard parts, we're happy to have that conversation.

Start a conversation — share your hardware, workload shape, and compliance constraints. We'll come back with where on the 7 surfaces we think you'll hit the biggest friction.

Start a 14-day trial — run Alli Coworker on your own hardware (or a representative staging cluster) before you commit.
