What actually happens when you deploy AI in enterprise environments? Allganize field engineers share three real cases: infrastructure failures after a security patch, multi-site deployment outages from configuration drift, and PII masking architecture redesigns in air-gapped networks.

What PoCs don't show you, and how we solved it in the field

We delivered a RAG-based AI agent to a large public energy utility, Company A. A conversational app that searches internal documents and answers questions. Everything worked fine through the PoC.

The problems started once it hit the production environment. Every time a user opened the app, a "Loading" screen hung there. We measured it: 15 seconds to first response, every single time.

Why 15 seconds matters: according to NN/g (Nielsen Norman Group) response time research, users lose the ability to maintain attention after 10 seconds (Jakob Nielsen, "Response Times: The 3 Important Limits"). Fifteen seconds is 1.5x that threshold. This isn't a minor UX annoyance. It determines whether frontline employees actually use the app or abandon it.

So we started tracing. But this client ran an air-gapped network. No OpenTelemetry. No Jaeger. LangSmith and Datadog were obviously out of the question. All we could do was manually instrument timing logs section by section and eyeball where the time was going. The result: bind_tools(), the step where tools get wired to the LLM. That's where the 15 seconds lived.

When we shared this in our internal product team channel, the reaction was... skeptical.

"I find it hard to believe that code path is causing the slowdown... what made you think so?"

A senior engineer wasn't buying it. Another engineer said, "I noticed the lag too, but I think it's the stage before graph entry, not bind_tools."

Then a third engineer jumped in:

"Has anyone checked for a bottleneck when creating or entering the agent app? I have a hunch."

We dug deeper. bind_tools itself wasn't the problem. MCP Hub, the central hub that manages agent tools, was failing to connect. With the connection dead, the system sat there waiting for the timeout to expire. Fifteen seconds later, it finally moved on.

[WARN] mcp-hub connection timeout after 15000ms — falling back to default tool registry

The product team senior nailed it:

"If you're seeing a consistent delay of exactly 15 seconds, something is trying to connect somewhere, hitting a 15-second timeout, and only proceeding after that."

On the cloud, a monitoring dashboard would have caught the connection failure instantly. On an air-gapped network, that wasn't an option. It took a field engineer manually planting logs and ping-ponging with the product team in a channel to narrow it down. The fix: tuning Helm values for external dependency timeouts (timeoutSeconds), retry intervals (backoffSeconds), and pre-connection on startup (initOnStart). Validation is underway.

A 15-second delay in the bind_tools step didn't mean bind_tools was slow. MCP Hub connectivity had failed, and figuring that out required three teams (field, product, infrastructure) putting their heads together in a channel.

This wasn't an isolated case. Industry-wide, McKinsey's annual survey (The state of AI in 2025, conducted June–July 2025) found that 88% of responding organizations had adopted AI in at least one business function, up 10 percentage points from 78% the previous year. But only a third said they were scaling AI across the organization. Gartner projected that more than 30% of generative AI projects would be abandoned after PoC by end of 2025 (Gartner Data & Analytics Summit, 2024).

Adoption is fast. Operations is where things stall. Company A's 15-second delay was one of many patterns we encountered. The three cases that follow cover what our engineering team ran into while building on-premises AI in the field: an infrastructure outage after a security patch, a multi-site deployment failure, and a PII masking architecture redesign.

(The cases below are based on recurring patterns from real production environments. Customer and personnel names have been anonymized. Technical root cause analyses are drawn from our engineering team's internal incident reports. Metrics and environmental conditions are specific to the respective customer deployments.)

Public institution B. Running an AI system on a 5-node MicroK8s cluster with 3 control plane nodes in HA configuration.

One afternoon, they applied settings from a security audit and rebooted. With managed Kubernetes (EKS, GKE), much of the control plane operations burden shifts to the vendor. On-premises, you own all of it.

After the reboot, the API server stopped responding.

According to the internal postmortem, the issue originated during leader election in dqlite, MicroK8s' built-in distributed consensus database. The most likely cause: time synchronization drifted after the reboot, or the security settings blocked the NTP path entirely.

They tightened security. It killed the infrastructure.

At 3:35 PM, the lead field engineer reported the outage. The remote support team started peeling back security settings one by one to narrow it down, but remote access wasn't enough. An engineer drove to the site.

Recovery went like this:

Afterward, the engineer wrote a troubleshooting report and drafted Helm chart improvements. The resulting security configuration document was later applied as a baseline for other customers.

Setting up K8s HA doesn't mean you're safe. A single security patch can break distributed consensus, and when that happens, remote won't cut it. Someone has to physically go there. MTTR stretches. SLA defense costs go up.

Financial group A. Three subsidiaries (a savings bank, a holding company, and a capital firm) each running the same AI system on their own air-gapped networks. Deployments were pushed centrally: one verified image, pushed to all three networks at once. A key component in the stack was the document parsing engine, the piece that converts uploaded documents into a format the AI can read.

The team deployed the same image across all three networks in the early morning hours. The parsing engine failed to start in two of them.

First instinct was GPU. NVIDIA drivers, CUDA versions, container runtime. In on-premises GPU environments, these are the usual suspects, so looking there first made sense. But the actual cause was somewhere else entirely. A frontend update had introduced a version mismatch in the API path where the document intelligence module calls the parsing engine. The same image was deployed everywhere, but the existing frontend versions differed across networks, so only some broke.

One component was touched. A dependency on another component broke. It wasn't an image problem. It was Configuration Drift, the gradual divergence of environment settings across networks.

Starting from midnight the night before, an engineer began remote debugging. They compared configurations between the one healthy network and the two broken ones, shared model loading log commands and curl test snippets for the staging environment, and gradually narrowed the scope. Rollback and redeployment restored service sequentially.

Canary deployments or blue-green strategies reduce this risk. But air-gapped networks have strict network segmentation, limited spare resources, and it's hard to stand up a separate validation environment. When things break at 3 AM, the gap between detection and response stretches even further.

That covers infrastructure and deployment. What comes next is security, and it doesn't get easier.

Public institution B, the same one whose cluster went down in Problem 1. This time, the issues hit at both the network boundary and the data boundary simultaneously.

Network side first. A web vulnerability assessment flagged a structure where three networks shared a single domain as a security violation. The resolution, splitting the domain while keeping the authentication scheme intact, was negotiated with the customer and cleared.

The data side was harder. When the AI agent invoked tools, a path was identified where national ID numbers, foreigner registration numbers, passport numbers, and driver's license numbers could be exposed in tool input/output.

South Korea's Financial Security Institute published AI Security Guidelines for the Financial Sector in 2023, which prescribes security checkpoints for AI service input/output data. Article 29 of the Personal Information Protection Act (analogous to GDPR's Article 32) provides the legal basis for safety measures. "Just turn on the masking feature" doesn't begin to cover it.

The engineering team designed a three-layer architecture:

The third layer is the critical design choice. Running LLM guardrails on every single request is safer, but it means an extra LLM inference per request, and the latency hit is significant. This customer chose to filter aggressively with regex on the first pass and restrict LLM inspection to high-risk requests only.

There are limits, of course. Free-text personal information and sensitive data without clear patterns slip past regex. That's why they run periodic sampling audits and supplementary checks on high-risk requests in parallel.

Flipping a feature toggle isn't enough. You're redesigning from domain architecture to data flow to the balance between security and performance, all at once.

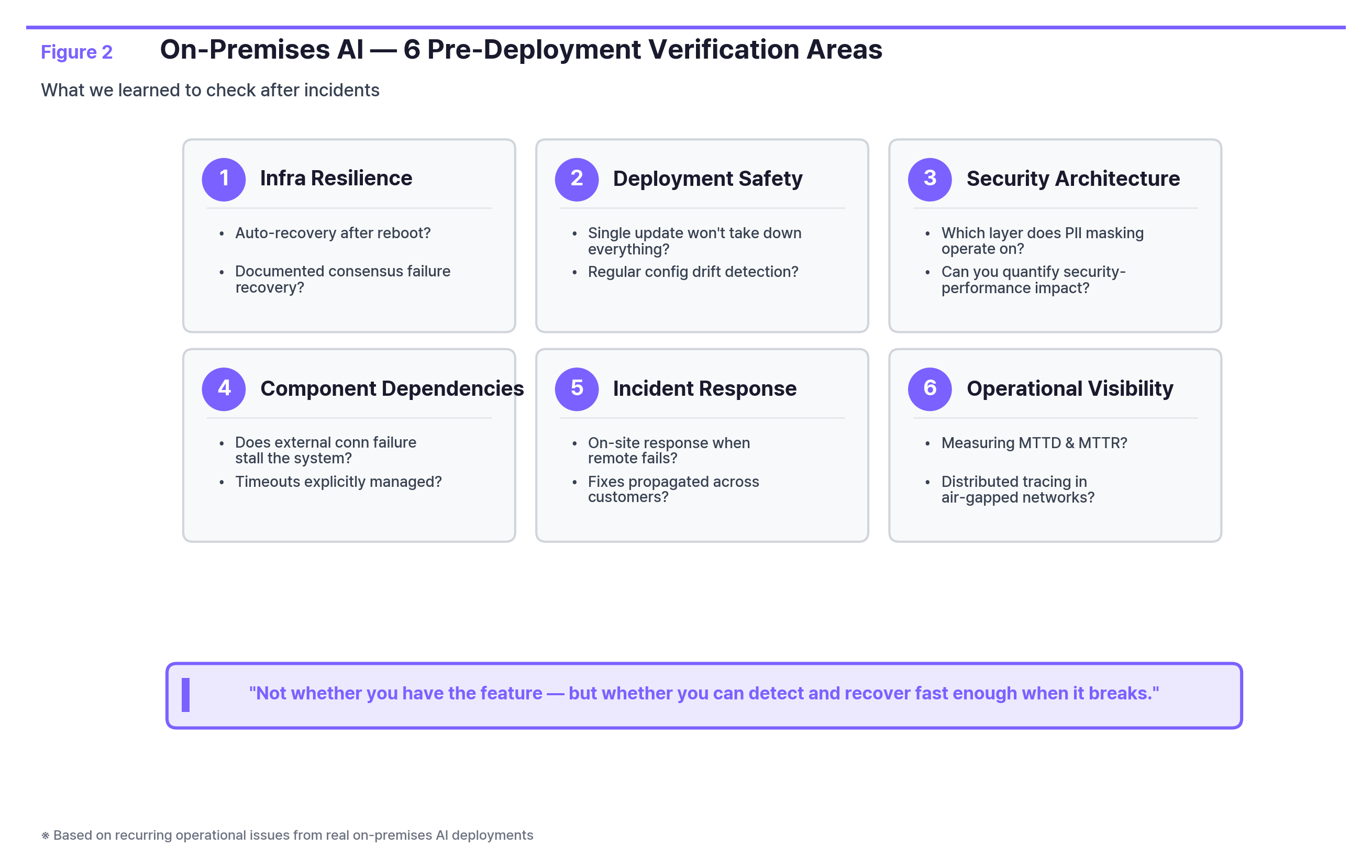

A set of questions cuts across all three cases.

The point of these questions isn't "do you have this feature or not." It's whether, when something actually breaks in production, you can detect it fast enough and recover fast enough.

There's a trade-off you can't avoid. Attach an LLM inspection to every request and you gain safety, but every request pays an inference cost and added latency. Cut the inspections and things speed up, but gaps appear.

Take PII masking as an example:

ApproachSecurity LevelPerformance ImpactImplementation ComplexityLLM guardrails (inspect every request)High+1–2s per requestMediumRegex pattern matchingMedium (misses non-patterned data)< 10msLowHybrid (regex + guardrails for high-risk only)Medium-highNormal < 10ms · High-risk +1–2sHigh

※ Single GPU node, 8B-class model, avg. 500-token request baseline. Varies by model size, token count, hardware, and concurrent request volume.

Public institution B chose the third option. Regex filters catch legally defined identifiers on the first pass; LLM guardrails apply only to high-risk requests. It was a joint decision between the security team, product team, and infrastructure team. Not the kind of call any single team makes alone.

This trade-off exists on the cloud too. But on-premises, GPU capacity is fixed, scaling is hard, and changing the network architecture isn't something you do casually, so once you choose, reversing course is significantly harder.

If you're considering deploying AI in the enterprise, whether with a vendor or your internal team, these are worth asking:

The vendors who can answer these concretely are the ones who've lived through the gap between PoC and production.

This article covered how different PoC and production really are: across infrastructure, deployment, and security.

The next installment goes deeper into the PII masking trade-off: the architecture, the layers, the cost. How teams resolved the collision between GPU resources and security requirements, and why security reviews never really end.

The cases in this article are drawn from the operational experience of Allganize's on-premises AI engineering team. All customer names and personnel have been anonymized.

Sources

Lorem ipsum dolor sit amet, consectetur adipiscing Aliquam pellentesque arcu sed felis maximus

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Curabitur maximus quam malesuada est pellentesque rhoncus.

Maecenas et urna purus. Aliquam sagittis diam id semper tristique.

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Curabitur maximus quam malesuada est pellentesque rhoncus.

Maecenas et urna purus. Aliquam sagittis diam id semper tristique.

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Curabitur maximus quam malesuada est pellentesque rhoncus.

Maecenas et urna purus. Aliquam sagittis diam id semper tristique.

Stay updated with the latest in AI advancements, insights, and stories.